Real-life application of Artificial Intelligence for ECG analysis

AI systems powered by deep learning have been developed for a variety of healthcare applications in recent years. In the field of electrocardiography (ECG), deep learning has revolutionized the way machines interpret signals. For the first time, this technological breakthrough has made it possible to capture atrial activity and link it with ventricular activity, thereby reproducing the human expert’s interpretation behaviour.

In this article we will demystify some of the major concepts behind deep neural networks, illustrate how deep learning applied to ECG analysis outperforms traditional methods, and finally explain why AI has the potential to transform cardiac diagnostics and relieve healthcare systems of the increasing pressure of demand.

A few basics on AI, ML and DL

Nowadays, we hear about artificial intelligence (AI), machine learning (ML) and deep learning (DL) constantly and for a variety of applications. One can easily get confused with those terms, and while they are related, it is important to understand the differences between them.

The term artificial intelligence was first used in the 1950s by John McCarthy to describe self-learning machines¹. Generally speaking, AI refers to intelligent machines and programs able to perform human-like tasks such as image or speech recognition. Machine learning is a subset of AI that gained prominence later, in the 1980s, and refers to the ability of a program to figure out patterns from data on its own, without being explicitly taught rules.

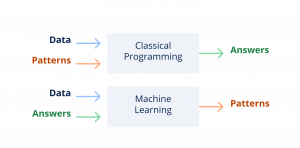

Whereas classical programming implies creating an algorithm with rules and sending a data input to get an answer, machine learning algorithms are created by feeding input data and answers (annotated data) into a program, and letting the program create its own interpretation patterns.
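The contrast can be sketched in a few lines of Python. Everything in this sketch is an illustrative assumption: the single heart-rate feature, the hand-written 100 bpm cut-off, and the toy annotated data are not taken from any real ECG system.

```python
def rule_based_is_fast(heart_rate_bpm):
    # Classical programming: a human writes the rule (the cut-off) by hand.
    return heart_rate_bpm > 100


def learn_threshold(rates, labels):
    # Machine learning in miniature: try each observed value as a cut-off
    # and keep the one that best reproduces the annotated answers.
    best_threshold, best_correct = None, -1
    for t in sorted(set(rates)):
        correct = sum((r > t) == y for r, y in zip(rates, labels))
        if correct > best_correct:
            best_threshold, best_correct = t, correct
    return best_threshold


# Toy annotated data (hypothetical): heart rates and expert answers.
rates = [55, 62, 70, 88, 96, 104, 118, 130]
labels = [False, False, False, False, False, True, True, True]

learned = learn_threshold(rates, labels)


def learned_is_fast(heart_rate_bpm):
    # The "rule" now comes from the data rather than from the programmer.
    return heart_rate_bpm > learned
```

In the first function the programmer supplies the rule; in the second the program derives its own rule from inputs paired with answers.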

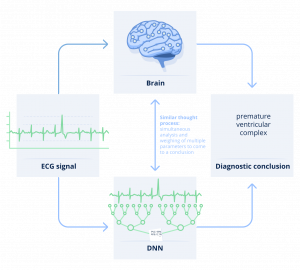

In the past two decades, deep learning was developed as yet another subset of machine learning techniques. Deep learning is defined by the use of algorithms called deep neural networks (DNN), which are loosely modeled after the human cerebral cortex, and specifically designed to recognize patterns from large amounts of data and solve problems involving complex reasoning.

In a nutshell, deep neural networks learn by themselves how to interpret data by analyzing vast amounts of data and coming up with their own interpretation patterns. Although the programmer is able to control the data that is fed into the DNN to train it, as well as the performance of the DNN by comparing its output to reality, the functioning of neural networks involves thousands of computations which are hard to describe simply with a limited set of rules.

This mystery around how the algorithm draws conclusions is what is commonly referred to as the “black box”. This can be perceived as a key barrier to adoption of AI systems, as it makes it hard to understand why and how an algorithm is drawing conclusions. In healthcare in particular, clinicians need to grasp the reasoning behind a recommendation to be able to trust it and facilitate patient engagement.

In this article, we show that there are many things which can be explained about DNNs, shedding light on their behavior, performance and limitations, to better grasp why they perform so well in some situations and why they can also sometimes fail. One of the key aspects of deep learning is that it relies on training a neural network to perform like a human and recognize patterns in images or data. There are several types of training mechanisms, but the most common one is supervised learning. More specifically, the DNN first needs to be fed with vast amounts of data annotated with regard to the specific question that the DNN needs to answer. Then, the algorithm needs to be validated and tested on separate datasets, in order to control and validate its performance without bias.

It is important here to understand the meaning and implications of two words in the phrase “vast amounts of annotated data”: vast and annotated. Vast because, similarly to a human expert, the algorithm needs to acquire a lot of experience to be able to create its own interpretation patterns and, in the end, differentiate one pattern from another. The data must not only be vast, it must also be representative of real-world data. If we want to be able to recognize various types of abnormalities, for instance, then we’ll need all of those to be available in sufficient quantities in the training dataset.
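As a minimal sketch of keeping training, validation and test data separate, the snippet below shuffles a list of annotated records and cuts it into three disjoint parts. The 70/15/15 proportions, the fixed seed and the placeholder records are assumptions chosen for illustration only.

```python
import random


def split_dataset(records, train_frac=0.7, val_frac=0.15, seed=0):
    # Shuffle a copy so the caller's list is left untouched.
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)

    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)

    train = shuffled[:n_train]                 # used to fit the network
    val = shuffled[n_train:n_train + n_val]    # used to tune and compare models
    test = shuffled[n_train + n_val:]          # held out for the final estimate
    return train, val, test


# Hypothetical annotated records: (record identifier, label).
records = [(f"ecg_{i}", i % 2) for i in range(100)]
train, val, test = split_dataset(records)
```

With medical data, a common precaution is to split by patient rather than by recording, so that strips from the same person never appear on both sides of the split.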

Second, the data needs to be annotated, meaning that each ECG strip is labeled according to pre-specified abnormalities, so that the algorithm may discern what characteristics of ECGs are associated with any given type of arrhythmia.

In conclusion, unlike traditional rule-based algorithms, machine learning algorithms have the ability to keep learning and improving as more and more data, with accurate corresponding expert interpretations and outcomes (“annotations”), accrue. This is why the DNNs are regularly re-trained (and validated) with new labeled data, in order to continue improving their performance. In short, they usually get better with experience, like humans do!

Deep neural networks applied to ECG analysis

Now, let’s dive into how this applies to analyzing an ECG signal with deep neural networks. Despite ECGs being one of the most common cardiac diagnostic tests that enable cardiologists to diagnose a variety of pathologies, ECG signals are quite complex to analyze, as they encompass subtle details and complex interactions. This makes ECGs a good use case for analysis with deep neural networks.

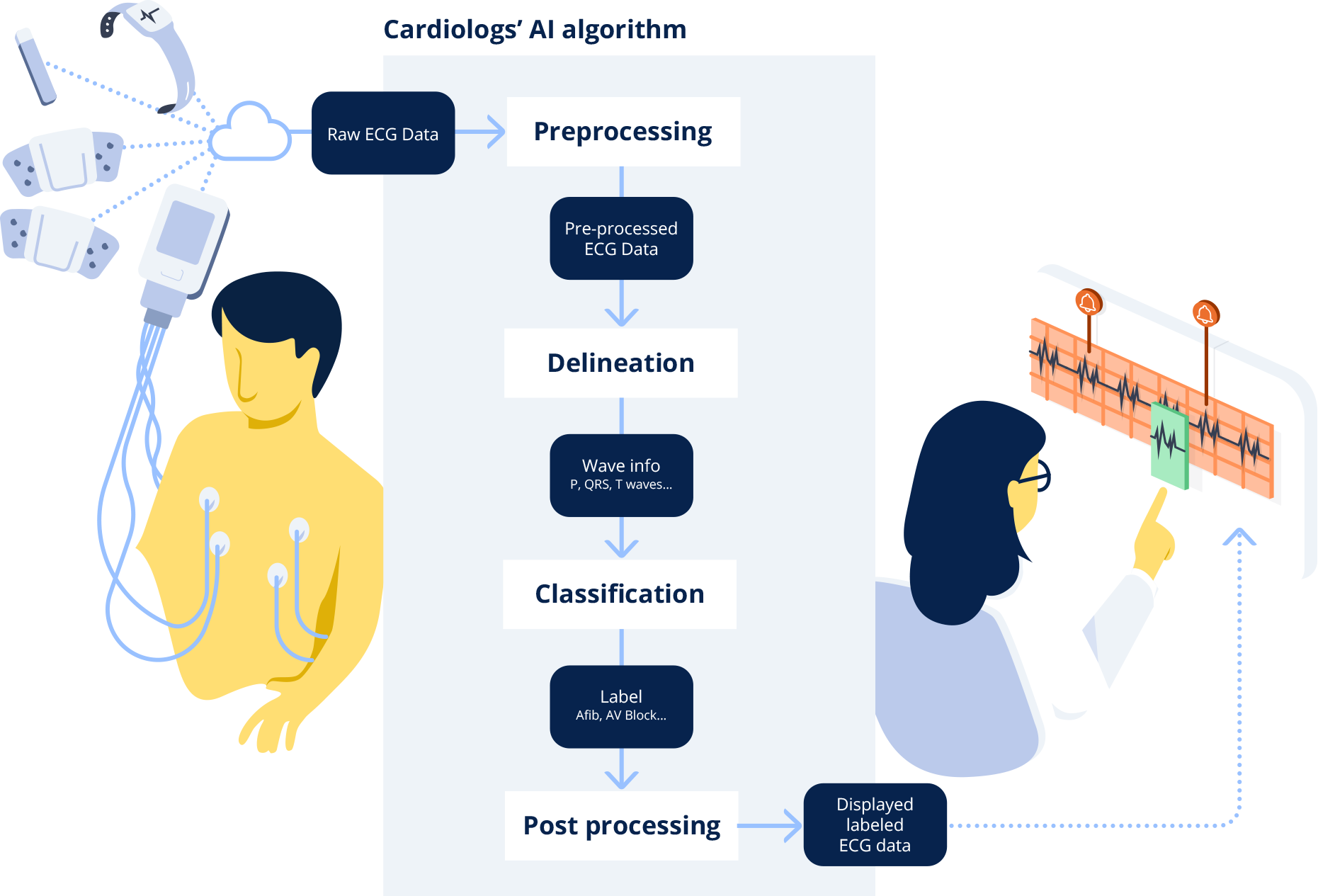

The Cardiologs Platform² proposes a novel approach to ECG analysis with an easily interpretable machine learning-based AI algorithm that combines several deep neural networks.

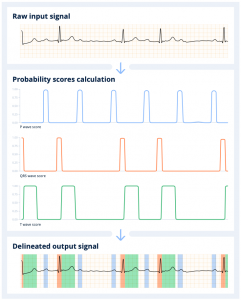

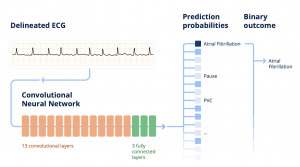

When a clinician uploads an ECG into the Cardiologs platform, the signal first goes through what is called a delineation network, which segments the signal into its different electrical waves (P, QRS, T). This delineation of the electrical waves provides clinical insights into the algorithm’s thinking. The resulting representative map is then fed through a classification network, which analyzes the map to detect abnormalities in the ECG signal and presents them to the clinician.

When the Cardiologs AI engine receives the ECG, it first scans it to detect the beats, and segments the signal into the different electrical waves (P, QRS, T) that compose it. The algorithm computes the probability of presence of each type of wave along the signal. Any given portion of the signal above a 0.5 probability threshold is detected as part of the wave. The final output consists of the initial ECG signal annotated with the P, QRS and T waves identified and positioned by the delineation algorithm.
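The thresholding step described above can be sketched as follows. This is a minimal illustration, not the Cardiologs implementation: the function name, the per-sample probability list and the choice of a strict "greater than" comparison are assumptions.

```python
def segments_above_threshold(probabilities, threshold=0.5):
    """Return (start, end) sample indices, end exclusive, of each run
    where the wave probability is above the threshold."""
    segments = []
    start = None
    for i, p in enumerate(probabilities):
        if p > threshold and start is None:
            start = i                      # a wave begins here
        elif p <= threshold and start is not None:
            segments.append((start, i))    # the wave ended on the previous sample
            start = None
    if start is not None:
        # The signal ends while still inside a wave.
        segments.append((start, len(probabilities)))
    return segments


# Hypothetical per-sample P-wave probabilities produced by a network.
p_wave_prob = [0.1, 0.2, 0.7, 0.9, 0.8, 0.3, 0.1, 0.6, 0.7, 0.2]
p_waves = segments_above_threshold(p_wave_prob)
```

Running the same function on the probability tracks for the QRS and T waves would give the three sets of segments that make up the annotated signal.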

The delineation network is trained using internal databases where the three types of waves (P, QRS, T) have been annotated. The DNN creates its own set of rules and is then able to learn to detect the presence of one or the other wave in a given strip. The power of the DNN is that by looking at the signal as a whole, it learns on its own that most of the time QRS waves are preceded by P waves, but not always. Similarly, it understands that a QRS wave is most often associated with a subsequent T wave.

Afterwards, the Cardiologs’ classification network evaluates the delineated ECG map to detect abnormalities in the signal. The classification network predicts probabilities for the presence of each main type of abnormality in ECG strips. What’s special about our classification algorithm is that we use a single model to predict the presence or absence of all labels simultaneously and interpret the entire ECG rhythm³. This enables the model to take into account the dependence between pathologies.

The Cardiologs® classification network is built on a specific type of DNN, called a convolutional neural network, which contains about 4 million parameters in total. In the end, from this predicted diagnosis, a binary outcome is calculated according to what is being studied, for instance: is atrial fibrillation (Afib) present or not in this episode?
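To illustrate how one model can score several labels at once and how a binary outcome can then be derived, here is a minimal sketch. The label names, the raw scores, the use of one sigmoid per label and the 0.5 decision threshold are all assumptions made for the example; the source does not specify these details.

```python
import math

# Hypothetical label set; a real system would cover many more abnormalities.
LABELS = ["atrial_fibrillation", "av_block", "premature_ventricular_complex"]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def label_probabilities(scores):
    # One sigmoid per label: the labels are not mutually exclusive,
    # so several abnormalities can be predicted for the same strip.
    return {label: sigmoid(s) for label, s in zip(LABELS, scores)}


def binary_outcome(probabilities, label, threshold=0.5):
    # Reduce the multi-label prediction to a yes/no answer for one question.
    return probabilities[label] >= threshold


# Hypothetical raw scores from the last layer of a classification network.
scores = [2.0, -1.5, 0.3]
probs = label_probabilities(scores)
afib_present = binary_outcome(probs, "atrial_fibrillation")
```

Because every label is scored by the same model from the same internal representation, the prediction for one abnormality can be informed by evidence for the others.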

The final post-processing step is to reconcile and aggregate the information from all of those analyzed strips and to provide the user with a comprehensive overview of the signal.

The Cardiologs’ classification DNN was initially trained using a very large dataset of ECGs individually annotated by expert cardiologists. To put this into perspective, if an individual were to review the Cardiologs training dataset, it would take them over 30 years to build up the same experience as the machine learning algorithm. Overall, more than 20 million ECGs have been processed by the Cardiologs algorithms, worth more than 3,000 years of ECG signal⁴.

How does deep learning compare with traditional algorithms for ECG analysis?

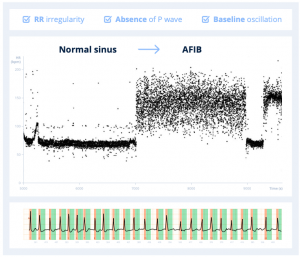

An ECG signal is interpreted by a human as a representative map of how an electrical wave is conducted in the atria and ventricles of the heart. For example, to detect atrial fibrillation, one learns to look at criteria such as RR irregularity, the absence of P wave, as well as baseline oscillation.

In order for an algorithm to be able to interpret the ECG trace, that trace first needs to be translated into a data representation, i.e. converted into numbers that a program can read. Only then can the program provide an answer based on that data representation using defined rules or criteria. In the past, computer programs have traditionally used handcrafted methods for both the data representation and the decision criteria, which has limited their ability to perform as well as humans in some situations.

If we use the example of atrial fibrillation, traditional handcrafted algorithms are limited in their ability to convert an ECG trace into a rich data representation, because they cannot easily capture the absence or presence of P waves. Detection of P-waves is challenging for traditional algorithms, because of their low signal to noise ratio, their possible overlapping with T-waves, as well as their absence and variability due to some specific morphologies or diseases.

The most commonly used method for delineation in traditional ECG analysis solutions is wavelet analysis. The usual process involves identifying QRS complexes first (the largest in amplitude), then P-waves (generally the smallest in amplitude), and finally the T-waves are deduced. This simplified approach fails to identify multiple P-waves and “hidden” P-waves.

Therefore, traditional algorithms miss a key factor for understanding the signal, and limit both their representation and criteria to the remaining elements, such as the RR interval variability. The approach described by Sarkar et al. in 2008⁵ is an example of a handcrafted approach both to the data representation and decision criteria for atrial fibrillation detection.
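A handcrafted representation based only on RR intervals can be sketched as below. This is a generic illustration and not the method of the cited paper: the coefficient of variation as the irregularity measure, the 0.1 cut-off and the example beat times are assumptions.

```python
from statistics import mean, pstdev


def rr_intervals(r_peak_times_s):
    # Time between consecutive R peaks, in seconds.
    return [b - a for a, b in zip(r_peak_times_s, r_peak_times_s[1:])]


def rr_irregularity(r_peak_times_s):
    # Handcrafted feature: coefficient of variation of the RR intervals.
    rr = rr_intervals(r_peak_times_s)
    return pstdev(rr) / mean(rr)


def looks_irregular(r_peak_times_s, cv_threshold=0.1):
    # Handcrafted decision criterion: a fixed cut-off on that single feature.
    return rr_irregularity(r_peak_times_s) > cv_threshold


# Hypothetical R-peak times in seconds.
regular = [0.0, 0.8, 1.6, 2.4, 3.2, 4.0]
irregular = [0.0, 0.5, 1.6, 2.0, 3.3, 3.7]
```

Note that nothing in this representation describes the P waves, which is precisely the information such an approach leaves out.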

The second typical limitation of traditional ECG algorithms is related to the classification methods. ECG analysis algorithms are traditionally conventional instructional algorithms, meaning they produce rule-based automated interpretations (“if, then”). They work like decision trees based on a limited and pre-defined number of rules.

Identifying abnormalities in most algorithms is performed using rules based on temporal and morphological indicators computed using the delineation, such as the PR interval, RR interval, QT interval, QRS width, level of the ST segment, slope of the T-wave, etc. But oftentimes, those algorithms⁶ are crude simplifications and do not reflect the way cardiologists analyze the ECGs, because they’re unable to handle as many parameters or take into account complex conflicting information.
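A rule-based classifier of this kind can be sketched as a short chain of "if, then" checks on measured intervals. The function below is a hypothetical illustration, not a reproduction of any commercial algorithm; the cut-offs (PR above 200 ms, QRS of 120 ms or more, heart rate above 100 or below 60 bpm) are common textbook values used here as assumptions.

```python
def classify_beat(pr_interval_ms, qrs_width_ms, rr_interval_ms):
    findings = []

    # Each rule looks at one indicator in isolation.
    if pr_interval_ms > 200:
        findings.append("prolonged PR interval")
    if qrs_width_ms >= 120:
        findings.append("wide QRS")

    heart_rate_bpm = 60000 / rr_interval_ms
    if heart_rate_bpm > 100:
        findings.append("tachycardia")
    elif heart_rate_bpm < 60:
        findings.append("bradycardia")

    return findings or ["no rule triggered"]


example_a = classify_beat(pr_interval_ms=240, qrs_width_ms=90, rr_interval_ms=800)
example_b = classify_beat(pr_interval_ms=160, qrs_width_ms=130, rr_interval_ms=500)
```

Each rule fires independently of the others, which is why such a scheme struggles when indicators conflict or when a measurement such as the PR interval cannot be computed reliably.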

Example of predefined sets of rules for classification of abnormalities in traditional ECG algorithms

They don’t capture the complexity of the signal as a whole, because they don’t look at the signal like a human would, taking into account complex relationships between parameters. If we take the example of the AV block, the key element to detecting an AV block in an ECG signal is understanding whether P waves conduct a QRS wave or not. P waves and QRS waves may well both be present, but if they’re not correlated and the former does not conduct the latter, then one may be facing an AV block. Unlike DNNs, traditional solutions are not good at recognizing AV blocks for instance, because they always look for the P wave before the QRS signal, whereas a DNN has no “a priori” and accepts that they may overlap and actually be disjointed.

Lastly, those algorithms are optimized to avoid missing abnormalities during classification: their parameters and rules are designed to be very sensitive. The downside is that they lack specificity, creating a lot of false alarms which are a burden to manage. In a study on Implantable Cardiac Monitors, Dr. Suneet Mittal showed that such devices created a huge amount of false positive Afib episodes, and demonstrated that almost 70% of those false alarms could be eliminated with the use of one of our DNN algorithms⁷. Moreover, if physicians don’t filter false alarms, patients may end up being treated for a condition they don’t actually have. Dr. John Mandrola commented on the bias induced by 12-lead ECG machine algorithms, describing cases where patients were anticoagulated following an overdetection of Atrial Fibrillation⁸ and suffered from bleeds as a consequence of that treatment.

In the past decade, machine learning has been applied to ECG analysis to try and improve algorithm performance in the detection of arrhythmia, in particular atrial fibrillation. In 2013, Colloca et al. introduced an incremental approach towards machine learning by using handcrafted data representation, but machine-learned decision criteria⁹.
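The trade-off between sensitivity and specificity can be made concrete with a small worked example. The episode counts below are invented purely for the arithmetic and do not come from the study cited above; the sketch also assumes that a second-stage filter removes 70% of false alarms without discarding any true detection.

```python
def sensitivity(tp, fn):
    # Share of true episodes that are detected.
    return tp / (tp + fn)


def specificity(tn, fp):
    # Share of normal episodes that are correctly left alone.
    return tn / (tn + fp)


def positive_predictive_value(tp, fp):
    # Share of alarms that correspond to a true episode.
    return tp / (tp + fp)


# Hypothetical counts for a very sensitive, not very specific detector.
tp, fn, fp, tn = 95, 5, 200, 700

# Assumed filter: 70% of false alarms are reclassified as normal.
removed = fp * 70 // 100
fp_after, tn_after = fp - removed, tn + removed

ppv_before = positive_predictive_value(tp, fp)
ppv_after = positive_predictive_value(tp, fp_after)
spec_before = specificity(tn, fp)
spec_after = specificity(tn_after, fp_after)
```

Under these assumptions sensitivity stays at 95%, while the share of alarms that are genuine roughly doubles, which is what reduces the review burden.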

The Cardiologs approach introduced in 2016¹⁰ is the first fully automatic approach, where both the data representation and the decision criteria are machine-learned, and not hand-crafted. Some algorithms use advanced machine learning techniques for finding individual abnormalities such as atrial fibrillation, but are limited to one abnormality at a time, whereas the Cardiologs® algorithm uses DNN technology to learn from, and interpret, the whole ECG, thus handling multiple abnormalities at once.

Our full deep learning approach made it possible, for the first time, to use RR irregularity and P-wave morphologies in combination, and thereby surpass state-of-the-art performance.

The future of electrocardiography lies within AI-assisted analysis

Healthcare professionals are facing an epidemic of patients with cardiac diseases requiring ECG diagnostics, such as Atrial Fibrillation, due to the growing prevalence of risk factors and an ageing population.

The volume of ECG data to analyze for diagnostics will further explode in the years to come with the advent and rapid adoption of sensors and wearables, such as smartwatches. The ongoing trend towards longer monitoring periods, which has been demonstrated clinically to improve detection performance, puts pressure on healthcare systems to find cost-effective solutions.

This, combined with the declining number of cardiologists in several regions of the world, will contribute to a bottleneck in patient diagnosis and treatment.

The cardiology diagnostics market needs transformation to be able to face these challenges. In order to provide the best of diagnostic care to patients and ensure optimal clinical outcomes, the market needs to transition towards scalable and cost-efficient diagnostic solutions, including longer recordings with automated analysis.

AI carries the promise of a future where patient access to the best of healthcare is universal, where caregivers are liberated from mundane technical and administrative tasks, and where health systems are able to face the economic pressure of an ageing population. We at Cardiologs aim to make cardiology diagnostics scalable and accessible to everyone, and provide tools for clinicians and service providers across the globe to face the increased demand for cardiological diagnosis. If implemented successfully, machine learning will improve quality, reduce the burden of cardiac data analysis and increase the capacity of the healthcare system to deliver more and improved patient care.